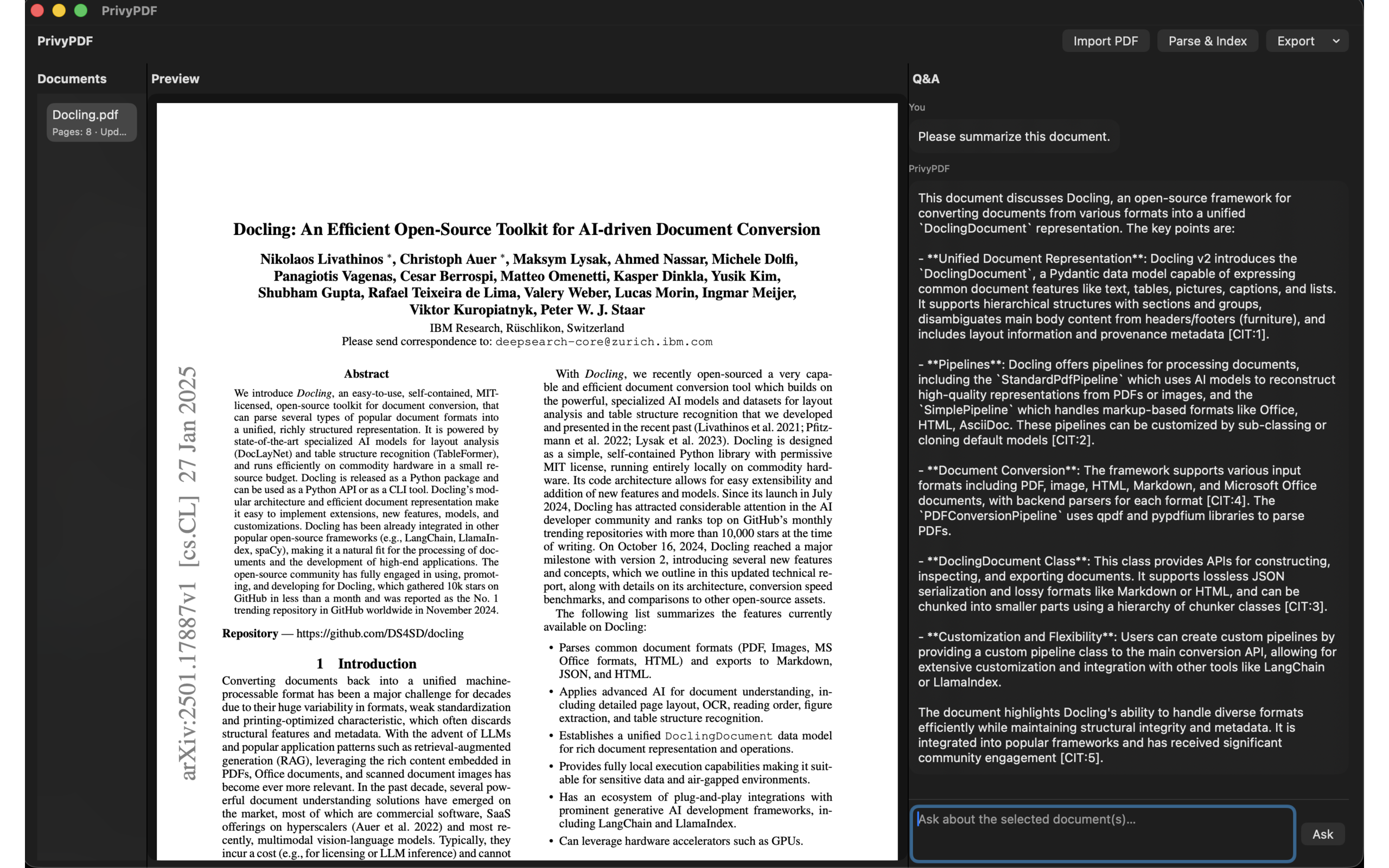

PrivyPDF

Local PDF Q&A with Ollama

Overview

Understand and chat with your PDFs—privately.

PrivyPDF is a local-first macOS app that turns your PDFs into a searchable, question-answerable knowledge base. All processing runs on your Mac. Your documents and embeddings stay in the app's sandbox, and no data is sent to the cloud. Think of it as a local entry point to your knowledge assets and a copilot dedicated to your PDFs.

How it works

- Import — Add PDFs via Import or drag-and-drop

- Parse & Index — Extract text and build a local vector index using your own Ollama server

- Ask — Type questions in natural language and get answers with source citations; responses stream in as they’re generated

- Jump — Tap a citation to jump to the exact page (and region) in the PDF preview

- Export — Export document text as Markdown or JSON for use elsewhere

Assistant replies support Markdown (bold, lists, code, and more). Resize the Documents or Q&A panels to focus on the PDF preview.
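The Parse & Index and Ask steps follow the usual retrieval pattern: split the extracted text into chunks, embed each chunk, and at question time rank the chunks by similarity to the embedded question, keeping page numbers so the answer can cite them. The Python sketch below shows only that ranking step, using cosine similarity. The `Chunk` type, the helper names, and the toy 3-dimensional vectors are hypothetical and are not PrivyPDF's actual code; in a real setup the vectors would come from the embedding model you configured in Ollama (for example through its `/api/embed` endpoint).

```python
import math
from dataclasses import dataclass


@dataclass
class Chunk:
    """A slice of extracted PDF text plus where it came from (hypothetical type)."""
    doc: str
    page: int            # 1-based page number used for the citation
    text: str
    vector: list[float]  # embedding produced by the embedding model


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k_chunks(query_vec: list[float], index: list[Chunk], k: int = 3) -> list[tuple[float, Chunk]]:
    """Score every chunk against the question vector and keep the best k."""
    scored = [(cosine(query_vec, c.vector), c) for c in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


def format_citations(hits: list[tuple[float, Chunk]]) -> list[str]:
    """Turn retrieved chunks into 'doc p. N' labels a UI could link to a page."""
    return [f"{c.doc} p. {c.page}" for _, c in hits]


if __name__ == "__main__":
    # Toy vectors stand in for real embeddings.
    index = [
        Chunk("manual.pdf", 4, "Install the driver before connecting.", [0.9, 0.1, 0.0]),
        Chunk("manual.pdf", 12, "Warranty terms and conditions.", [0.0, 0.2, 0.9]),
        Chunk("notes.pdf", 2, "Driver installation troubleshooting.", [0.8, 0.3, 0.1]),
    ]
    question_vec = [1.0, 0.2, 0.0]  # pretend embedding of "How do I install the driver?"
    hits = top_k_chunks(question_vec, index, k=2)
    print(format_citations(hits))  # prints ['manual.pdf p. 4', 'notes.pdf p. 2']
```

A linear scan like this is fine for a handful of documents; larger libraries usually swap in an approximate nearest-neighbor index, but the citation bookkeeping (carrying the page number alongside each vector) stays the same.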

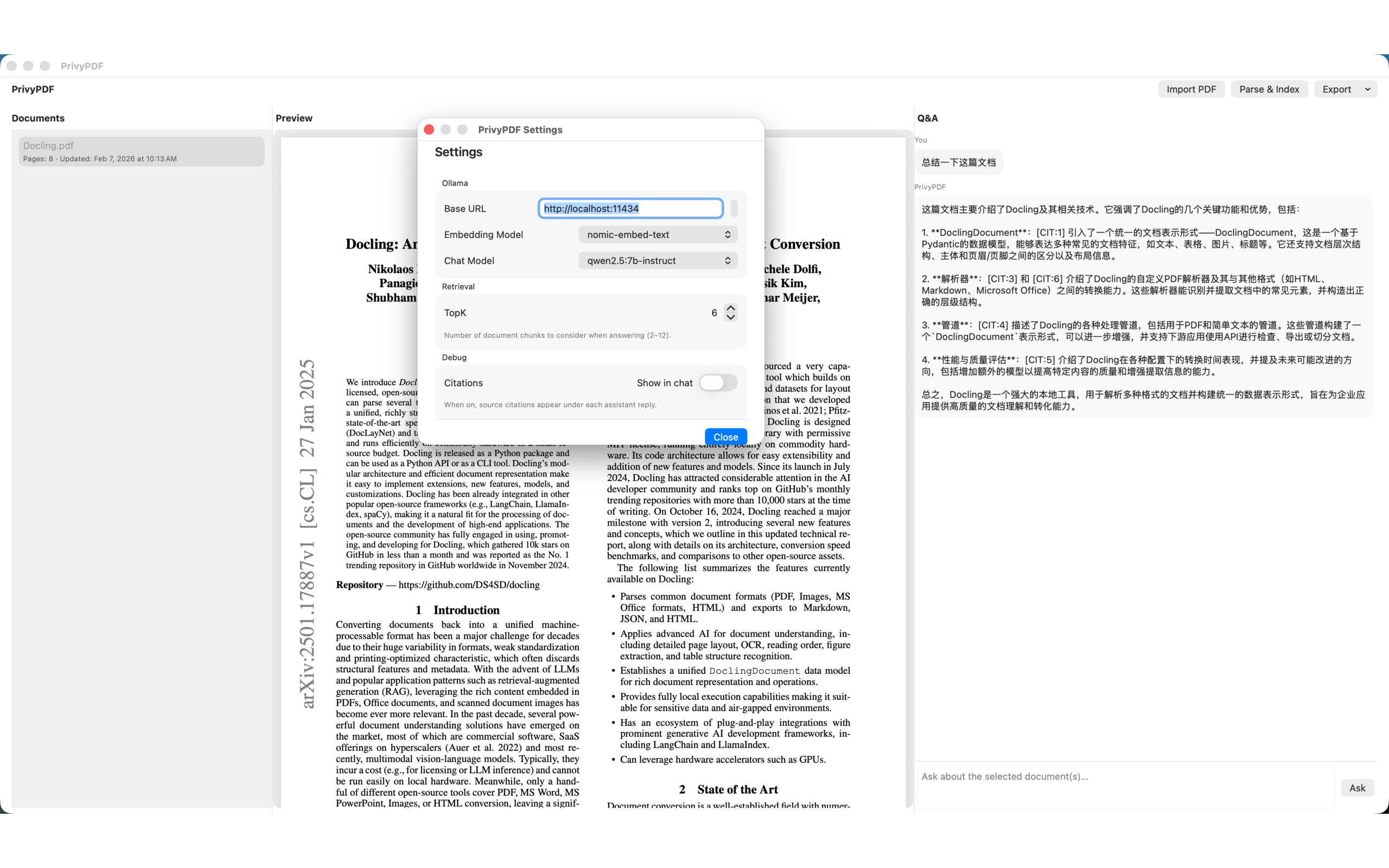

Privacy by design

PDFs and indexes are stored only in the app's sandbox. AI runs via Ollama on http://localhost:11434. The app does not ship or download model weights. No account, no telemetry, no external servers for your documents.
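In practice, "AI runs via Ollama on localhost" means each question becomes an HTTP request to the local server, and a streamed reply comes back from Ollama's `/api/chat` endpoint as newline-delimited JSON: one object per text fragment, with `"done": true` on the last one. The standard-library Python sketch below sends one question to that local endpoint and joins the fragments as they arrive. The function names and the default model are illustrative assumptions, not PrivyPDF's code, and a real app would also put the retrieved PDF passages into the prompt.

```python
import json
import urllib.request
from typing import Iterable, Iterator

OLLAMA_URL = "http://localhost:11434"  # default local Ollama address


def iter_fragments(lines: Iterable[bytes]) -> Iterator[str]:
    """Yield the text fragment from each NDJSON line of an /api/chat stream."""
    for raw in lines:
        raw = raw.strip()
        if not raw:
            continue  # tolerate blank keep-alive lines
        obj = json.loads(raw)
        if "error" in obj:
            raise RuntimeError(obj["error"])  # Ollama reports stream errors as {"error": ...}
        piece = obj.get("message", {}).get("content", "")
        if piece:
            yield piece
        if obj.get("done"):
            break  # final object carries stats, not more text


def ask_local(question: str, model: str = "qwen2.5:7b-instruct") -> str:
    """Send one chat turn to the local Ollama server, printing the reply as it streams."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "stream": True,
    }).encode("utf-8")
    req = urllib.request.Request(
        f"{OLLAMA_URL}/api/chat",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    parts = []
    with urllib.request.urlopen(req) as resp:  # iterating the response yields one line at a time
        for piece in iter_fragments(resp):
            print(piece, end="", flush=True)
            parts.append(piece)
    print()
    return "".join(parts)
```

With Ollama running and the model pulled, `ask_local("What is a vector index?")` prints the answer incrementally and returns the full text; the only address it contacts is the local one in `OLLAMA_URL`.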

Requirements

- macOS 14.6 or later

- Ollama: installed and running locally, with at least one embedding model (e.g. qwen3-embedding:0.6b or nomic-embed-text) and one chat model (e.g. qwen2.5:7b-instruct). Configure the base URL and models in PrivyPDF → Settings. Download Ollama at ollama.ai.
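Before indexing, it can help to confirm that the models you plan to configure are actually installed. Ollama's `GET /api/tags` endpoint lists local models as `name:tag` entries, and a bare name such as `nomic-embed-text` refers to the `latest` tag. The Python sketch below compares that list against the required models; it is an illustrative check with hypothetical helper names, not PrivyPDF's own startup logic, and the tag normalization is a simple heuristic.

```python
import json
import urllib.request


def normalize(name: str) -> str:
    """Ollama lists models as name:tag; a bare name means the 'latest' tag."""
    return name if ":" in name else f"{name}:latest"


def missing_models(tags_response: dict, required: list[str]) -> list[str]:
    """Return the required model names that are absent from a /api/tags response."""
    installed = {normalize(m["name"]) for m in tags_response.get("models", [])}
    return [r for r in required if normalize(r) not in installed]


def fetch_tags(base_url: str = "http://localhost:11434") -> dict:
    """GET /api/tags from the Ollama server; raises URLError if it is not reachable."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        return json.load(resp)
```

With Ollama running, `missing_models(fetch_tags(), ["nomic-embed-text", "qwen2.5:7b-instruct"])` returns an empty list when both models are present; any name it returns can be installed with `ollama pull <name>`.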

Who it's for

- Developers and engineers who want to query technical docs offline

- Researchers and knowledge workers who need citations and local control

- Enterprise knowledge workers and anyone who values privacy and wants a PDF copilot that never uploads their data

Screenshots